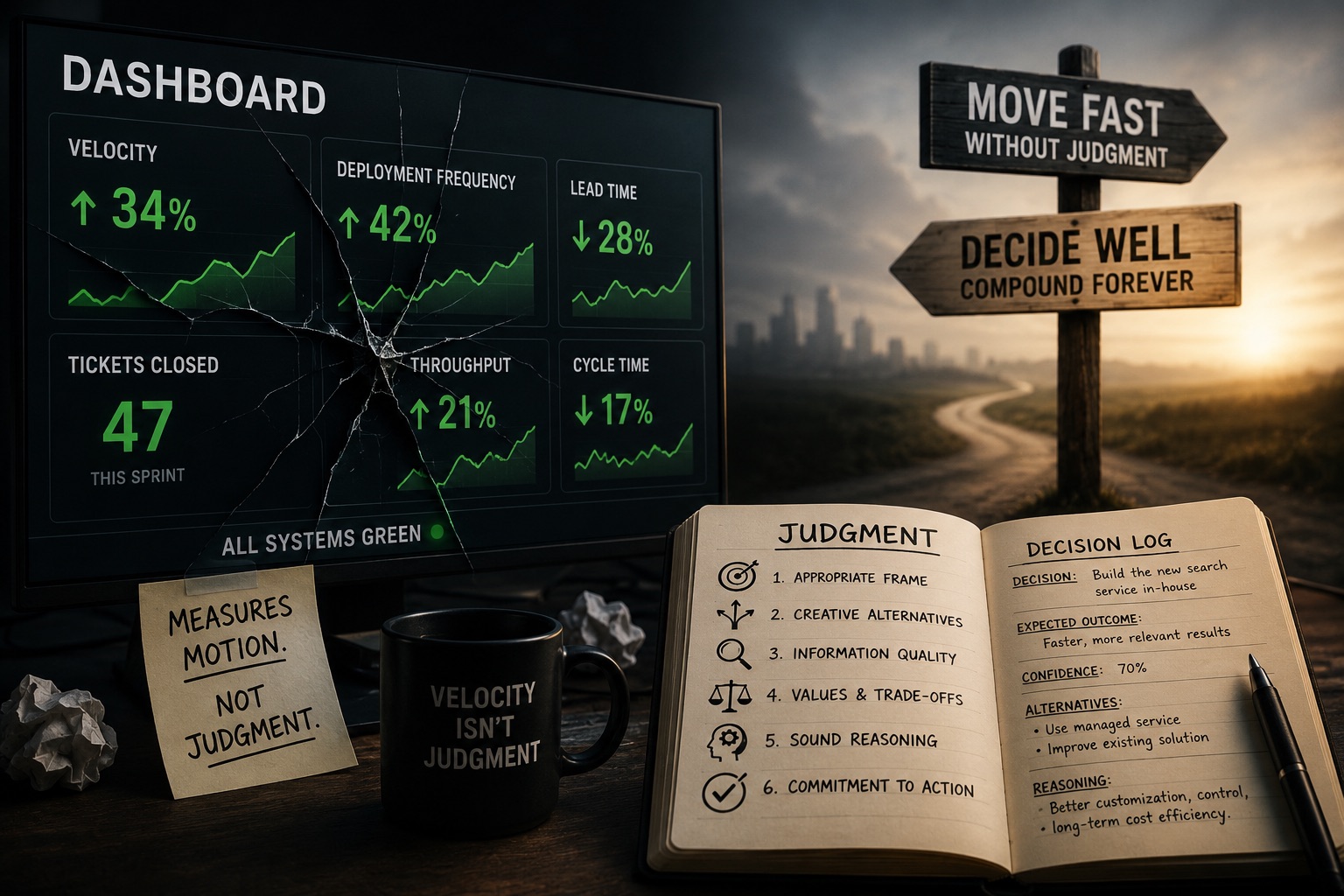

Your team closed 47 tickets last sprint. Deployment frequency is up. Lead time is down. The sprint review looked clean.

And you have no idea whether your team is getting better at what matters.

Every metric on that dashboard measures motion. None of them measures whether the team made the right decision. In an era where AI generates the first draft of almost everything (code, architecture proposals, incident summaries, product specs), that gap is no longer academic. It is the difference between a team that compounds in judgment and a team that accelerates toward the wrong destination.

This is the hardest management question in an AI-accelerated organization: how do you measure judgment? Here is what the research says, what it still can’t answer, and what a judgment-healthy team actually looks like in practice.

Why Current Metrics Fail

I have never trusted vanity metrics. Lines of code per engineer, tickets closed per sprint, velocity charts that go up and to the right: these numbers tell you something happened. They tell you nothing about whether it was the right thing, decided well, for the right reasons. I said this to skeptical managers for years and mostly lost the argument, because the dashboard was right there, and judgment was not.

The research has caught up.

A consistent finding across 2024–2026 practitioner literature is that velocity, story points, lines of code, and even most DORA metrics measure output rather than the quality of the reasoning that produced it. Stack Overflow’s Beyond Speed essay (May 2025) makes the point directly: when velocity becomes the target, teams stop making good decisions and start optimizing for the number. Developers, and their managers, end up optimizing for the wrong thing. Engineering Quadrant (2025) makes the structural point: velocity is a useful forecasting tool and a dangerous scorecard. Same number. Two completely different uses. Most teams use it as the scorecard.

The AI era has made this failure mode visible in a way it wasn’t before.

The 2025 DORA State of AI-Assisted Software Development report, with ~5,000 respondents, offers the clearest indictment from within the measurement establishment itself. AI adoption jumped 14 points in a single year, hitting 90%. Throughput metrics improved. And yet instability remained elevated, and 30% of developers still reported little to no trust in the code AI generated. The report’s own conclusion: “Simple delivery metrics aren’t enough, they tell you what is happening but not why it’s happening.”

That sentence is DORA admitting that DORA metrics are insufficient. Worth pausing on.

The report’s central reframe: AI is a mirror and a multiplier. It accelerates whatever the organization already is. High-functioning teams get faster. Dysfunctional teams get worse faster. If your measurement system only captures speed, you will not see the multiplier working against you until the damage is done.

Faros AI’s telemetry analysis of 10,000+ developers (July 2025) makes the paradox concrete. Teams with high AI adoption completed 21% more tasks and merged 98% more pull requests. PR review time went up 91%. Context switching went up 47%. Aggregate DORA metrics were flat or worse. The work did not disappear. It moved into review queues, into integration failures, into the places where human judgment is required to catch what the AI got wrong.

More tasks. More PRs. More review burden. No improvement in delivery stability. That is not a productivity story. That is a judgment displacement story.

The Problem Is Measuring Outcomes Instead of Reasoning

The failure of activity metrics is well understood. The deeper problem is less discussed: even when teams do look beyond velocity, they almost always evaluate the outcome rather than the decision that produced it.

Annie Duke calls this “resulting.” The error is treating the quality of a decision as though it can be read from the quality of its outcome. A good decision can fail. A bad decision can succeed. If you only review wins and losses, you are training your team to be lucky, not good. Most retrospectives are structured backward: the outcome is already in the room when the reasoning is first examined.

This is not a process failure. It is a structural one. The outcome is known before the retrospective begins, and it shapes every interpretation that follows. The team that shipped the incident blames the wrong variable. The team that hit the deadline celebrates the wrong decision. Neither one gets better at deciding.

The research literature on agile retrospectives at scale is blunt about this. Studies find no standard measurement of whether retrospective outcomes were ever achieved. Teams run retrospectives, identify action items, and have no mechanism to return to them. The ritual exists. The learning is not guaranteed.

What the AI era demands is a shift from measuring what happened to measuring how the team reasoned before anyone knew what would happen.

What Good Decision-Making Actually Looks Like

Carl Spetzler’s decision quality framework, formalized in Decision Quality (Spetzler, Winter, and Meyer; Wiley, 2016), gives the clearest structural answer to that question. A decision is only as strong as its weakest element across six dimensions:

- Appropriate frame - did the team define the right problem?

- Creative alternatives - did the team generate genuine options, or rationalize a predetermined answer?

- Relevant and reliable information - was the reasoning grounded, or overfit to available data?

- Clear values and trade-offs - did the team know what it was optimizing for?

- Sound reasoning - did the conclusion follow from the inputs?

- Commitment to action - was the decision actually made, not deferred?

This is the closest thing to a rubric for judgment. A team that consistently frames problems narrowly, skips alternatives, and calls the first reasonable answer “the decision” has a judgment problem that no velocity chart will surface. But a pre-decision review against these six elements would surface it immediately.

The decision journal tradition, popularized by Shane Parrish at Farnam Street and drawing on Kahneman, operationalizes this as a written artifact. Before the outcome is known, you record: the situation, the decision, the expected outcome, your confidence level, the alternatives you rejected, and your reasoning. Weeks or months later, you return to it. The outcome cannot retroactively rewrite what you believed and why.
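The six things a journal entry records map naturally onto a small record type. What follows is a minimal sketch, not a prescribed format: the class name, field names, and sample values are all invented for illustration. The one design choice worth noting is that the outcome is attached once, at review time, and the pre-outcome fields are never rewritten.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class JournalEntry:
    """One pre-outcome record. Field names are illustrative assumptions."""
    situation: str
    decision: str
    expected_outcome: str
    confidence: float                 # stated probability, 0.0 to 1.0
    rejected_alternatives: List[str]
    reasoning: str
    recorded_on: date
    # Filled in only at review time; the fields above are left untouched.
    actual_outcome: Optional[str] = None
    reviewed_on: Optional[date] = None

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")

    def record_outcome(self, outcome: str, when: date) -> None:
        """Attach the outcome once; refuse to overwrite an earlier review."""
        if self.actual_outcome is not None:
            raise ValueError("outcome already recorded")
        if when < self.recorded_on:
            raise ValueError("review cannot predate the entry")
        self.actual_outcome = outcome
        self.reviewed_on = when


# Hypothetical example entry.
entry = JournalEntry(
    situation="Search latency is rising with catalog size",
    decision="Buy a hosted search service instead of building one",
    expected_outcome="p95 search latency under 200 ms within six weeks",
    confidence=0.8,
    rejected_alternatives=["Build in-house", "Tune the existing SQL queries"],
    reasoning="Team has no search expertise; vendor cost is below one hire",
    recorded_on=date(2026, 1, 12),
)
entry.record_outcome("p95 latency reached 180 ms in week five", date(2026, 3, 2))
print((entry.reviewed_on - entry.recorded_on).days, "days between belief and review")
```

A spreadsheet or a shared document does the same job. What matters is that the belief carries a timestamp that predates the outcome.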

Atlassian, Farnam Street, and multiple decision-science practitioners converge on the same finding: writing down the reasoning before the outcome is known is the mechanism that makes learning possible. Without it, hindsight bias fills every gap.

This is the direct ancestor of the Judgment Log in JDD. Not a post-mortem. Not a retro. A pre-commitment to what you believed, written when you believed it, available for review when reality arrives.

Documenting Decisions Is Not Governing Them

Architecture Decision Records were introduced by Michael Nygard in 2011 and have since been adopted by AWS, Google Cloud, Microsoft Azure, and ThoughtWorks. The standard structure (context, decision, alternatives considered, and consequences) captures the reasoning in the moment. AWS’s 2024 architecture blog, reporting on ADR practice across projects with 200+ records and teams of 10 to 100+ engineers, identifies the primary payoff: decisions no longer get relitigated, onboarding gets faster, and an audit trail exists.

That is real value. It is not enough.

ReflectRally’s 2025 analysis of ADR implementations identifies the common failure: “Documenting decisions is not the same as governing them.” Teams write ADRs. Nobody reviews them against outcomes. The reasoning gets captured and then abandoned. The artifact exists without the practice.

This is the gap that matters. Documentation without review is a filing system. It preserves the past. It does not improve the future.

Governing decisions means returning to them. It means asking, when the outcome is known, whether the reasoning was sound. Was the frame right? Which of Spetzler’s six elements was weakest? Did we call the confidence level accurately? It means treating past decisions as data about your team’s judgment quality, not as history to explain, but as evidence to update from.

The Judgment Log only works as a measurement instrument if the team closes the loop. Writing it is the start. Reviewing it is the practice.
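Closing the loop is easier when the log itself tells you what is waiting. A rough sketch, assuming each entry stores the date it was decided and the date it was reviewed (if ever). The `LogEntry` type, the function name, and the twelve-week default are assumptions for illustration, not part of any ADR standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class LogEntry:
    title: str
    decided_on: date
    reviewed_on: Optional[date] = None   # None means nobody has gone back to it


def overdue_for_review(entries: List[LogEntry], today: date,
                       after_days: int = 84) -> List[LogEntry]:
    """Entries never reviewed and at least after_days old (84 days = twelve weeks)."""
    cutoff = today - timedelta(days=after_days)
    return [e for e in entries if e.reviewed_on is None and e.decided_on <= cutoff]


# Hypothetical log contents.
entries = [
    LogEntry("Adopt hosted search", date(2026, 1, 12)),
    LogEntry("Defer billing refactor", date(2026, 1, 19), reviewed_on=date(2026, 4, 13)),
    LogEntry("Split out the auth module", date(2026, 3, 30)),
]

for e in overdue_for_review(entries, today=date(2026, 4, 20)):
    print("needs review:", e.title)
```

Running something like this before each retro turns “we should revisit that” into a short, concrete agenda.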

What You Actually Measure

This is where the field gets honest about its limits. There is no four-metric dashboard for judgment the way DORA defined four metrics for delivery. The research supports a three-layer model.

Calibration - are we right as often as we think we are?

Philip Tetlock’s Good Judgment Project competed in a multi-year forecasting tournament run by IARPA. Trained amateur forecasters reportedly outperformed intelligence analysts with access to classified information by roughly 30%. In Tetlock’s own account, the project beat the tournament’s control group by about 60% in its first year, and a short training in probabilistic thinking improved accuracy by roughly 10%. The measurement instrument is the Brier score: the mean squared difference between the probability you stated and what actually happened, where lower is better. A team that says it is 80% confident and is right 80% of the time is well-calibrated. A team that says 80% and is right 50% of the time has a measurable judgment problem.

You do not need to score every decision. You need to score a consistent sample over time and watch whether the gap between stated confidence and actual accuracy narrows or widens. That trend line is a judgment metric.

Here is what this looks like in practice. At the start of a sprint, the team identifies two or three significant decisions: a build vs. buy call, a database schema choice, a decision to defer a refactor. For each, the lead writes one sentence: what they expect to happen, and their confidence level as a percentage. Six weeks later, in retro, they pull the log. Three decisions, three predictions, three outcomes. Did the team that said it was 90% confident on the schema choice get it right? If 90% confidence keeps tracking to 60% accuracy, the team is systematically overconfident. If 70% confidence tracks to 70% accuracy over a quarter, the team is calibrated. The Brier score formalizes this mathematically, but even informal tracking over eight to ten decisions starts to show the pattern. The point is not precision. The point is that “we think we’re right” stops being an assertion and becomes a testable claim.
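For teams that want the arithmetic, here is a small sketch of both views of the same log: the Brier score (mean squared gap between stated probability and what happened) and a plain calibration table. The ten sample predictions are invented, and the function names are mine. With samples this small the numbers are a conversation starter, not a verdict.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# (stated confidence, whether the prediction came true). Sample data is invented.
Forecast = Tuple[float, bool]


def brier_score(forecasts: List[Forecast]) -> float:
    """Mean squared gap between stated probability and outcome (0 is perfect)."""
    if not forecasts:
        raise ValueError("need at least one forecast")
    return sum((p - float(hit)) ** 2 for p, hit in forecasts) / len(forecasts)


def calibration_table(forecasts: List[Forecast]) -> Dict[float, Tuple[int, float]]:
    """Group by stated confidence; return {confidence: (count, hit rate)}."""
    buckets: Dict[float, List[bool]] = defaultdict(list)
    for p, hit in forecasts:
        buckets[round(p, 1)].append(hit)
    return {p: (len(hits), sum(hits) / len(hits))
            for p, hits in sorted(buckets.items())}


log = [
    (0.9, True), (0.9, False), (0.9, True), (0.9, False), (0.9, True),
    (0.7, True), (0.7, True), (0.7, False), (0.7, True), (0.7, False),
]

print(f"Brier score: {brier_score(log):.3f}")
for stated, (n, hit_rate) in calibration_table(log).items():
    print(f"stated {stated:.0%} -> right {hit_rate:.0%} of the time (n={n})")
```

In this made-up log the 90% calls land 60% of the time, which is the overconfidence pattern described above. The trend across quarters matters more than any single score.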

Process quality - did the reasoning meet the bar before the outcome was known?

Spetzler’s six elements become a pre-decision rubric. Which element was weakest on this call? Score it at the time of decision, not retrospectively. Aggregate the scores across a quarter. A team that consistently scores low on “creative alternatives” has an identifiable, improvable pattern of judgment. This is a leading indicator: it tells you something about the next decision, not just the last one.

The practical version: before any decision above a certain threshold (call it anything that would take more than two weeks to reverse), the team spends fifteen minutes scoring it against Spetzler’s six elements. Not a full workshop. A structured conversation. The tech lead asks: do we have the frame right, or are we solving the wrong problem? Did we actually generate alternatives, or did we rationalize the answer we already had? What are we optimizing for, and does everyone agree? Each element gets a score from 1 to 3. A decision with a 1 on “creative alternatives” does not get approved until someone generates at least two genuine options. A decision with a 1 on “appropriate frame” goes back for reframing. After a quarter of doing this, the scores cluster. Most teams discover they consistently shortcut the same element, usually alternatives or values. That pattern is the leading indicator. Fix the pattern, and you improve decisions that haven’t happened yet.
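The fifteen-minute review reduces to two small operations: gate a single decision, then tally which element was weakest across the quarter. A sketch under stated assumptions follows. The function names and sample scores are hypothetical, and the choice not to count a “weakest” element when every score is 3 is mine, not Spetzler’s.

```python
from collections import Counter
from typing import Dict, List

ELEMENTS = [
    "appropriate frame",
    "creative alternatives",
    "relevant and reliable information",
    "clear values and trade-offs",
    "sound reasoning",
    "commitment to action",
]
# Elements where a score of 1 sends the decision back, per the text above.
BLOCKING = {"appropriate frame", "creative alternatives"}


def review(scores: Dict[str, int]) -> Dict[str, object]:
    """Validate one 1-3 score per element; report weakest elements and blockers."""
    missing = [e for e in ELEMENTS if e not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    if any(scores[e] not in (1, 2, 3) for e in ELEMENTS):
        raise ValueError("each score must be 1, 2, or 3")
    low = min(scores[e] for e in ELEMENTS)
    # Assumption: a decision scoring 3 everywhere has no weakest element.
    weakest = [e for e in ELEMENTS if scores[e] == low] if low < 3 else []
    blockers = [e for e in ELEMENTS if e in BLOCKING and scores[e] == 1]
    return {"weakest": weakest, "blockers": blockers, "approved": not blockers}


def quarterly_pattern(all_scores: List[Dict[str, int]]) -> Counter:
    """Count how often each element was the weakest across reviewed decisions."""
    tally: Counter = Counter()
    for scores in all_scores:
        tally.update(review(scores)["weakest"])
    return tally


# Three hypothetical decisions from one quarter.
quarter = [
    {**dict.fromkeys(ELEMENTS, 3), "creative alternatives": 1},
    {**dict.fromkeys(ELEMENTS, 3), "creative alternatives": 2,
     "clear values and trade-offs": 2},
    {**dict.fromkeys(ELEMENTS, 3), "creative alternatives": 2},
]

print(review(quarter[0]))
print(quarterly_pattern(quarter).most_common(1))
```

The tally is the leading indicator. If one element tops it quarter after quarter, that is where the next fifteen minutes of coaching should go.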

Governance health - are we closing the loop?

Two lagging metrics worth tracking.

Decision reversal rate: how often does the team relitigate a decision it has already made and documented? High reversal rate signals either poor original reasoning or a failure to capture context well enough to defend the call later. Both are judgment signals.

Tracking it is simple. Count the significant decisions your team logged in a quarter. Count how many were reopened and relitigated within ninety days. Divide. A 10–15% reversal rate is noise: context changes, new information arrives, and some revisiting is healthy. A 30%+ reversal rate means either the original decisions weren’t real or the reasoning wasn’t captured well enough to hold. Neither is a process problem. Both are judgment problems.
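The count-and-divide step, written out. This is a sketch: the `LoggedDecision` type and the sample quarter are invented. The text only labels two bands, so treating everything at or below 15% as noise and calling the 15–30% middle “watch” are my assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class LoggedDecision:
    title: str
    decided_on: date
    reopened_on: Optional[date] = None   # None means never relitigated


def reversal_rate(decisions: List[LoggedDecision], window_days: int = 90) -> float:
    """Share of logged decisions reopened within window_days of being made."""
    if not decisions:
        raise ValueError("no decisions logged")
    window = timedelta(days=window_days)
    reopened = sum(
        1 for d in decisions
        if d.reopened_on is not None and d.reopened_on - d.decided_on <= window
    )
    return reopened / len(decisions)


def interpret(rate: float) -> str:
    """Bands from the text: around 10-15% is noise, 30% or more is a warning."""
    if rate >= 0.30:
        return "judgment problem"
    if rate <= 0.15:
        return "noise"
    return "watch"   # assumption: the text does not name the 15-30% band


# Ten hypothetical decisions logged on the same day, three later reopened.
q1 = [LoggedDecision(f"decision {i}", date(2026, 1, 5)) for i in range(10)]
q1[0].reopened_on = date(2026, 2, 1)
q1[1].reopened_on = date(2026, 3, 20)
q1[2].reopened_on = date(2026, 6, 1)    # outside the 90-day window, not counted

rate = reversal_rate(q1)
print(f"{rate:.0%} -> {interpret(rate)}")
```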

Decision-to-outcome gap: when you return to a logged decision and compare the reasoning to what happened, how often was the reasoning sound despite an adverse outcome? This operationalizes Duke’s “resulting” correction. A team that reasons well and gets unlucky looks different in the log from a team that reasoned carelessly and got lucky. Over time, the pattern distinguishes skill from noise.

The practice is straightforward. At the end of each quarter, the team reviews every logged decision from twelve weeks prior. For each one, two questions: was the outcome good or bad? Was the reasoning sound at the time? A good outcome from bad reasoning is not a win; it is a near-miss that taught the team nothing. A bad outcome from sound reasoning is not a failure; it is information about the environment. The team that can make that distinction consistently is building judgment. The team that celebrates every good outcome and blames every bad one on bad luck is not.
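Those two questions form a two-by-two grid, and the quarterly review is mostly a matter of counting which cell each decision lands in. A minimal sketch: the cell labels other than “near-miss” are my own shorthand, and the sample reviews are invented.

```python
from collections import Counter
from typing import List, Tuple

# (reasoning was sound at the time, outcome was good)
Review = Tuple[bool, bool]

# Labels are illustrative shorthand for the four cells of the grid.
LABELS = {
    (True, True): "earned win",
    (True, False): "sound but unlucky",
    (False, True): "lucky near-miss",
    (False, False): "earned loss",
}


def classify(reviews: List[Review]) -> Counter:
    """Count how many reviewed decisions fall into each cell."""
    return Counter(LABELS[r] for r in reviews)


def sound_given_bad_outcome(reviews: List[Review]) -> float:
    """Of decisions with a bad outcome, the share whose reasoning was sound."""
    bad = [sound for sound, good in reviews if not good]
    if not bad:
        raise ValueError("no adverse outcomes to evaluate")
    return sum(bad) / len(bad)


# Six hypothetical decisions reviewed at quarter end.
reviews = [
    (True, True), (True, True), (True, False),
    (False, True), (False, False), (True, False),
]

for label, count in classify(reviews).items():
    print(f"{label}: {count}")
print(f"sound reasoning among bad outcomes: {sound_given_bad_outcome(reviews):.0%}")
```

The judgment of whether reasoning was sound is still a human call, ideally made against the written log rather than memory. The code only keeps the count honest.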

None of these is as clean as deployment frequency. That is the point. The work of building a judgment-healthy team does not resolve into a single number. It resolves into a practice: the discipline of writing down what you believe before you know whether you are right, reviewing it when you find out, and letting the pattern tell you how your team actually decides.

What a Judgment-Healthy Team Looks Like

The metrics give you a signal. The behaviors are what you actually observe.

A judgment-healthy team disagrees before it decides and commits after. The disagreement is substantive: alternatives are named, trade-offs are argued, and the frame gets challenged. Then the decision gets made, and the team moves. Relitigating after the fact is rare because the reasoning was captured and the commitment was real.

A judgment-healthy team runs retrospectives that look at decisions, not just outcomes. It asks which calls from six weeks ago have landed and which haven’t, and compares those outcomes with the team’s predictions. It treats the delta as information.

A judgment-healthy team can articulate what it decided not to do and why. Rejected alternatives are not forgotten. They are documented. When a rejected path resurfaces six months later, the team can explain the original reasoning and update it with new information rather than starting over.

A judgment-declining team looks different. Velocity is high. Retros are positive. And every quarter, there is a different crisis: an architectural decision that nobody remembers making, a third-party dependency that nobody evaluated, a product direction that three people thought was decided and two people thought was still open. The activity metrics look fine until the accumulated decisions compound into something visible.

The gap between the two shows up most clearly in two moments. The first is when a rejected alternative resurfaces. The judgment-healthy team opens the log, reads the original reasoning, and says: that was right then, here is what has changed, here is whether we should reconsider. The judgment-declining team starts the debate from scratch, relitigates what was already decided, and wastes the hours it already spent on the first round. The second moment is the post-incident review. The judgment-healthy team can separate what was knowable from what wasn’t. The judgment-declining team retrofits the narrative to the outcome and walks away with a false lesson.

DORA 2025 is right: AI amplifies what is already there. A team with strong judgment discipline accelerates. A team without it moves faster toward the wrong place. The measurement system you build now determines which one you have.

The Cheapest Code in 2026 Is the Code Nobody Had to Think About

AI has made execution cheap. The cost of generating a solution has dropped close to zero. The cost of generating the wrong solution at scale with confidence has not changed.

Calibration. Process quality. Governance health. Three layers. One practice: write what you believe before you know whether you are right, return to it when you find out, and let the pattern tell you how your team actually decides.

The dashboard will keep showing green. The question is whether the green means anything.