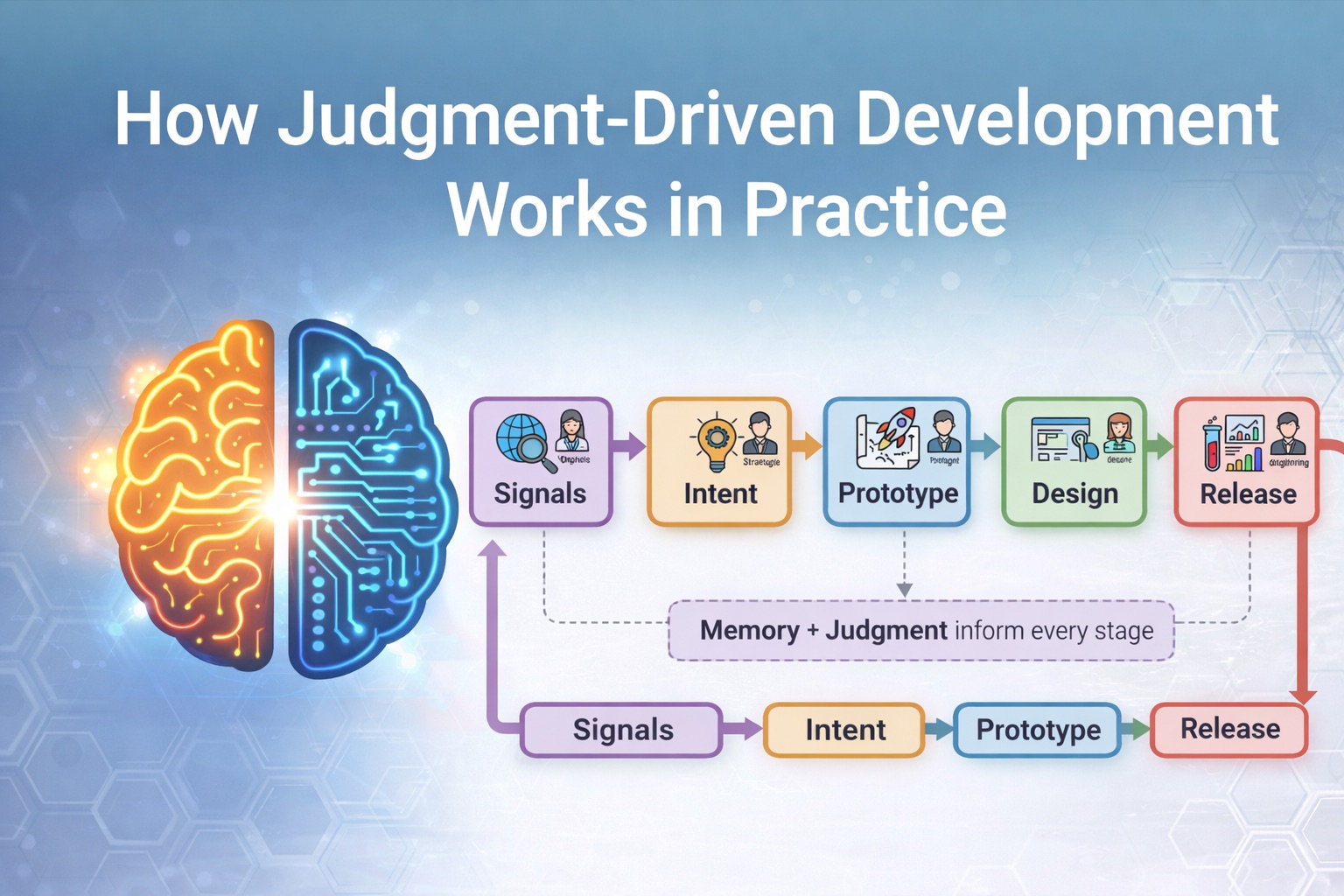

How Judgment-Driven Development Works in Practice

In the previous post, I argued that defining decision boundaries is necessary if we want judgment to survive in an AI-accelerated development environment. When execution becomes cheap, the number of decisions explodes. Without clarity around who decides what, speed simply amplifies risk.

But defining boundaries alone is not enough.

The next question is more practical: how does work actually flow through those boundaries? How does an idea move from a conversation with a customer to something running in production, while AI accelerates the process without collapsing responsibility across roles?

Judgment-Driven Development does not replace the existing team structure. Product managers, designers, engineers, DevOps, and security roles still exist. What changes is how quickly each role can move and how tightly they can collaborate.

AI becomes an accelerator embedded in every stage, while judgment remains with the humans responsible for the consequences.

To understand how this works, it helps to start with the beginning of the cycle.

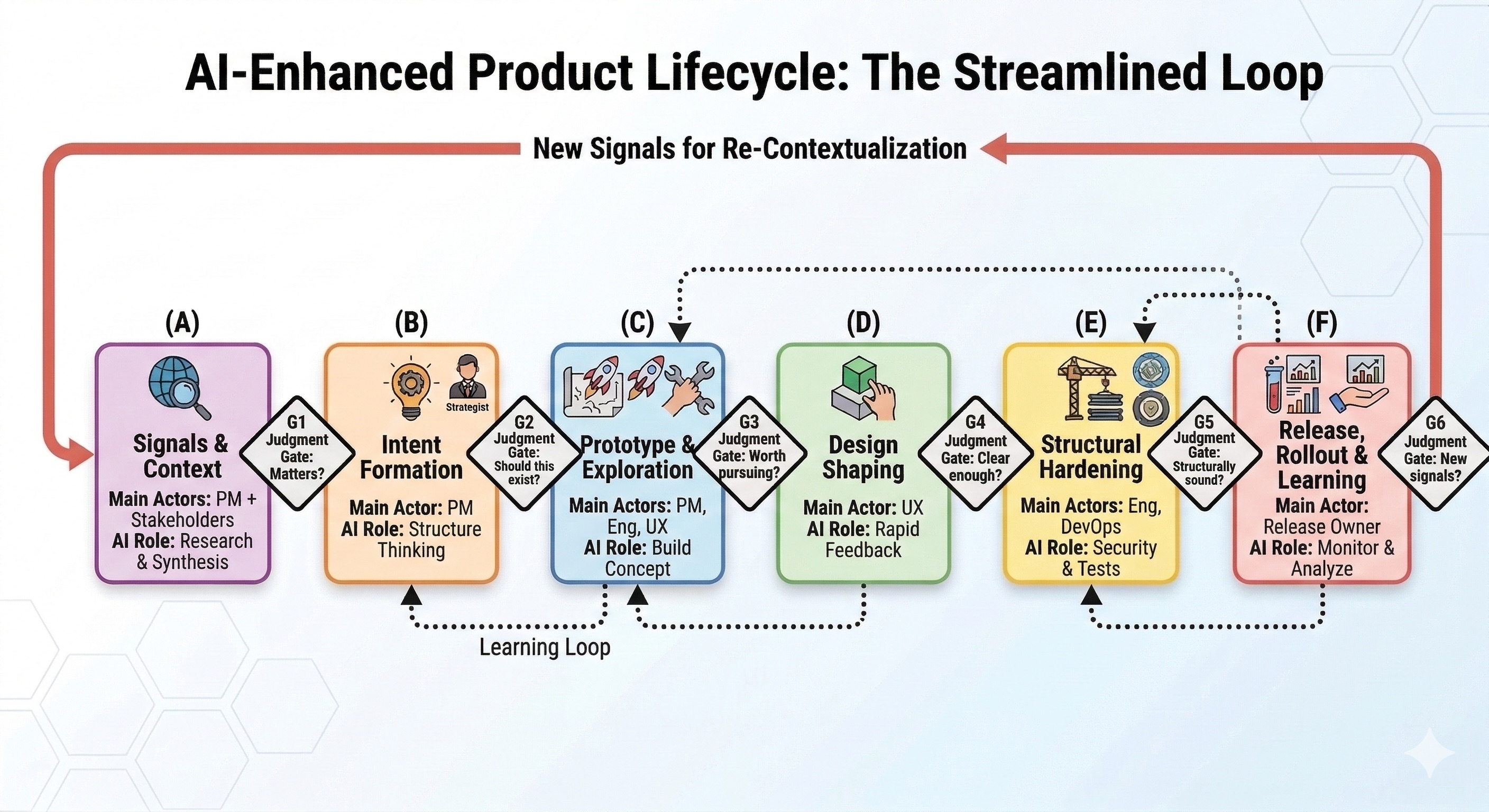

Where Ideas Actually Start

Most product ideas do not start with a feature request.

They begin as signals.

Some signals come directly from customers. A recurring complaint. A support ticket that keeps appearing. A sales conversation that reveals a gap.

Others come from usage data. A workflow that users keep trying to bend in unintended ways. A feature that nobody touches. A pattern emerging in telemetry.

But many important signals come from outside the product itself. New regulations that change compliance requirements. A new technology that enables something that was previously impractical. A shift in how organizations operate. Sometimes, even a new way of thinking about a problem.

The product manager sits at the center of this signal environment. Conversations with customers, discussions with internal stakeholders, and notes in systems like Salesforce, Jira, or Slack gradually add up to a picture of where the system might need to evolve.

AI becomes useful here not as a decision-maker, but as a research and synthesis partner. It can act almost like a tireless research intern, quickly producing deep explorations of a topic, summarizing new regulations, mapping competitive landscapes, or explaining emerging technologies that might affect the product.

At the same time, it can cluster related signals, surface similar historical discussions, and highlight contradictions in assumptions. Instead of spending days collecting and summarizing information, the PM can explore the landscape much faster and more broadly.
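To make the clustering idea concrete, here is a minimal sketch. It assumes signals arrive as short text notes and groups them by word overlap (Jaccard similarity). The function names, the stopword list, and the 0.3 threshold are all illustrative assumptions. A real setup would more likely rely on embeddings or an LLM, but the shape is the same: the tool groups, and the PM decides what the groups mean.

```python
import re

# Illustrative stopword list; an assumption, not a standard.
STOPWORDS = {"the", "a", "an", "to", "of", "in", "is", "for", "and", "on", "with"}


def tokens(text: str) -> set:
    """Lowercase the text and keep the words that are not stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def jaccard(a: set, b: set) -> float:
    """Share of words two token sets have in common (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def cluster_signals(signals: list, threshold: float = 0.3) -> list:
    """Greedily place each signal in the most similar existing cluster,
    or start a new cluster when nothing is similar enough."""
    clusters = []  # each item: {"tokens": set of words, "members": list of signals}
    for signal in signals:
        words = tokens(signal)
        best, best_score = None, threshold
        for cluster in clusters:
            score = jaccard(words, cluster["tokens"])
            if score >= best_score:
                best, best_score = cluster, score
        if best is None:
            clusters.append({"tokens": set(words), "members": [signal]})
        else:
            best["members"].append(signal)
            best["tokens"] |= words
    return [c["members"] for c in clusters]


signals = [
    "Export to CSV fails on large reports",
    "CSV export times out for large reports",
    "Customers ask for SSO login support",
]
groups = cluster_signals(signals)
```

Here the two CSV complaints land in one group and the SSO request in another. The output is only a grouping; nothing in it says which group deserves attention.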

But AI still does not decide which signals matter.

That remains a human judgment.

Turning Signals Into Intent

Once enough signals point in a direction, the next step is not to build. It is to clarify intent.

This is where AI becomes a thinking partner.

Instead of immediately writing a formal document, the product manager can begin by pouring raw ideas, research, and collected signals into AI and using it to sharpen the thinking. The model can challenge assumptions, propose alternative framings, and clarify the argument.

From that process, the PM can distill the idea into a structured form. Sometimes this becomes a PRFAQ. Sometimes a lightweight PRD. The exact format matters less than the clarity of the intent.

If the idea is large or strategic, this document becomes something that can be shared with others to align thinking. If the change is small, the process can move quickly into prototyping.

The key point is that intent becomes explicit before momentum builds around implementation.

Rapid Prototyping

Once intent is clear enough, AI dramatically shortens the distance between thinking and building.

Instead of waiting for weeks of development before seeing something real, the PM can generate a working prototype directly from the specification. Sometimes it is a backend flow. Sometimes it includes a simple UI. Sometimes it is just enough code to demonstrate behavior.

The goal is not production quality.

The goal is to turn ideas into artifacts that people can react to.

What used to take weeks can often happen in hours. Instead of debating abstract descriptions, the team gets a shared object that everyone can explore and critique.

This stage still lives inside a sandbox. These are reversible decisions. Two-way doors.

Design Shaping and Early Feedback

If the concept includes user interaction, design now becomes deeply involved.

Instead of receiving a written description of what might exist someday, designers can work directly with a living prototype. They can reshape the flows, simplify interactions, and apply their experience to improve the system’s behavior.

AI also assists heavily at this stage. It can generate layout variations, interaction structures, and visual components.

But design judgment determines which options survive.

Because the system is already interactive, feedback loops become much faster. Prototypes can be shared internally to gather quick reactions. They can also be tested externally through rapid user testing tools to observe how real users interact with the idea.

What used to take weeks of design cycles can now happen in days.

Structural Hardening

Once the concept proves promising, the system moves into a different phase. Exploration gives way to structural responsibility.

This is where engineering judgment becomes critical.

Developers are not simply reviewing generated code. They are turning a prototype into something that can survive in production. That means examining security implications, aligning with system architecture, improving performance, and ensuring the system can be observed and maintained.

AI helps here as well, often through specialized agents. Some tools focus on security scanning. Others analyze performance patterns, validate dependencies, or generate tests.

Each of these agents accelerates a specific dimension of system hardening.

But the responsibility for deciding whether the system is structurally sound remains with engineers.
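One way to picture this split is a release gate where automated agents can only contribute evidence and a named engineer has to sign off. The sketch below is a hypothetical illustration: the class names, fields, and check names are assumptions, not part of any specific tool.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CheckResult:
    """Output of one automated agent (security scan, perf analysis, tests...)."""
    name: str
    passed: bool
    notes: str = ""


@dataclass
class HardeningReview:
    """Evidence gathered by agents plus the human decision on top of it."""
    checks: list = field(default_factory=list)
    approved_by: Optional[str] = None  # engineer who accepts responsibility

    def approve(self, engineer: str) -> None:
        self.approved_by = engineer


def ready_for_production(review: HardeningReview) -> bool:
    """Green checks are necessary but never sufficient:
    a human sign-off is always required."""
    has_evidence = len(review.checks) > 0
    all_green = all(check.passed for check in review.checks)
    return has_evidence and all_green and review.approved_by is not None


review = HardeningReview(checks=[
    CheckResult("security-scan", True),
    CheckResult("dependency-audit", True),
    CheckResult("generated-tests", True),
])
before_signoff = ready_for_production(review)  # False: no engineer has signed off
review.approve("engineer-on-call")
after_signoff = ready_for_production(review)   # True: evidence plus a decision
```

The design choice is that no combination of passing checks can flip the gate on its own. The agents accelerate the review; the approval field records who owns the outcome.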

This stage is where ideas become systems.

Controlled Release and Experimentation

Delivery does not always mean a full release.

One advantage of AI-accelerated development is that experimentation becomes easier. Features can be deployed behind flags. Multiple variants can be tested through A/B experiments. Different approaches can run in parallel while data reveal which one works better.

Instead of committing to a single path early, the team can keep several options open and let the results decide.
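As a rough sketch of how a flag and an A/B split can work together, the example below assigns users to variants deterministically by hashing the user ID with the experiment name. The function name, parameters, and the experiment and variant names are assumptions for illustration; real feature-flag services offer their own APIs for this.

```python
import hashlib
from typing import Optional


def assign_variant(user_id: str, experiment: str, variants: list,
                   rollout: float = 1.0) -> Optional[str]:
    """Return the variant this user sees, or None if the flag is off for them.

    The same user and experiment always produce the same answer, so no
    assignment table needs to be stored.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()

    # The first 4 bytes decide whether the user is inside the rollout (0.0 to 1.0).
    rollout_bucket = int.from_bytes(digest[:4], "big") / 2**32
    if rollout_bucket >= rollout:
        return None

    # The next 4 bytes pick the variant, independently of the rollout decision.
    index = int.from_bytes(digest[4:8], "big") % len(variants)
    return variants[index]


variants = ["control", "new-checkout"]
first = assign_variant("user-42", "checkout-redesign", variants)
second = assign_variant("user-42", "checkout-redesign", variants)
flag_off = assign_variant("user-42", "checkout-redesign", variants, rollout=0.0)
```

Setting `rollout` back to `0.0` turns the feature off for everyone without a redeploy, which is what keeps this stage a reversible decision.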

Those experiments generate new signals. Usage patterns, experiment results, and operational data all feed back into the signal layer.

Which brings us to the most important characteristic of this model.

This Is a Cycle, Not a Line

The development flow may appear sequential when described step by step, but in practice, it behaves more like a loop.

Signals shape intent. Intent becomes prototypes. Prototypes evolve through design and engineering. Releases generate new signals.

And the cycle continues.

The Real Shift

The important shift here is not that AI replaces roles.

It amplifies them.

Product managers can move from ideas to working artifacts much faster. Designers can explore interaction spaces more deeply. Engineers can harden systems more efficiently. DevOps and security teams can enforce structural safety with stronger tooling.

Each role becomes an enhanced version of itself.

What remains constant is judgment. Each role still owns decisions inside its domain.

AI compresses execution. Humans retain accountability.

Which is exactly the balance that Judgment-Driven Development is trying to protect.